🎉 As we enter 2024, we take a moment to reflect on our achievements in the past year and to share our exciting plans for the coming year.

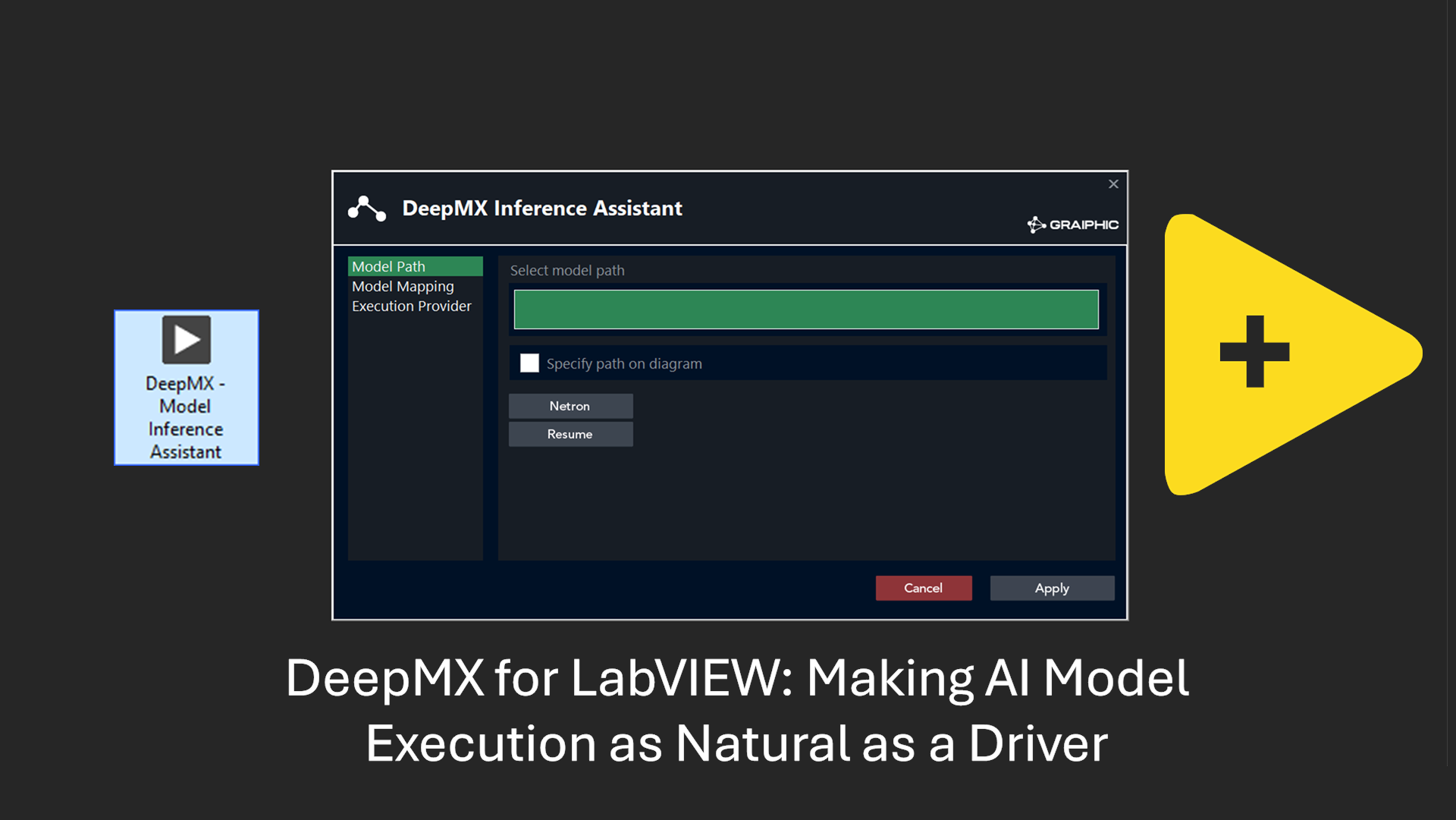

2023 was a pivotal year for us. After two years of intense development, we launched the first version of our deep learning toolkit, 𝐇𝐀𝐈𝐁𝐀𝐋, on December 12, 2022. Soon after, we added CUDA integration along with a tailor-made hardware installation solution, developed entirely in-house and operational since December 21, 2022. These advancements marked the beginning of a development-rich 2023.

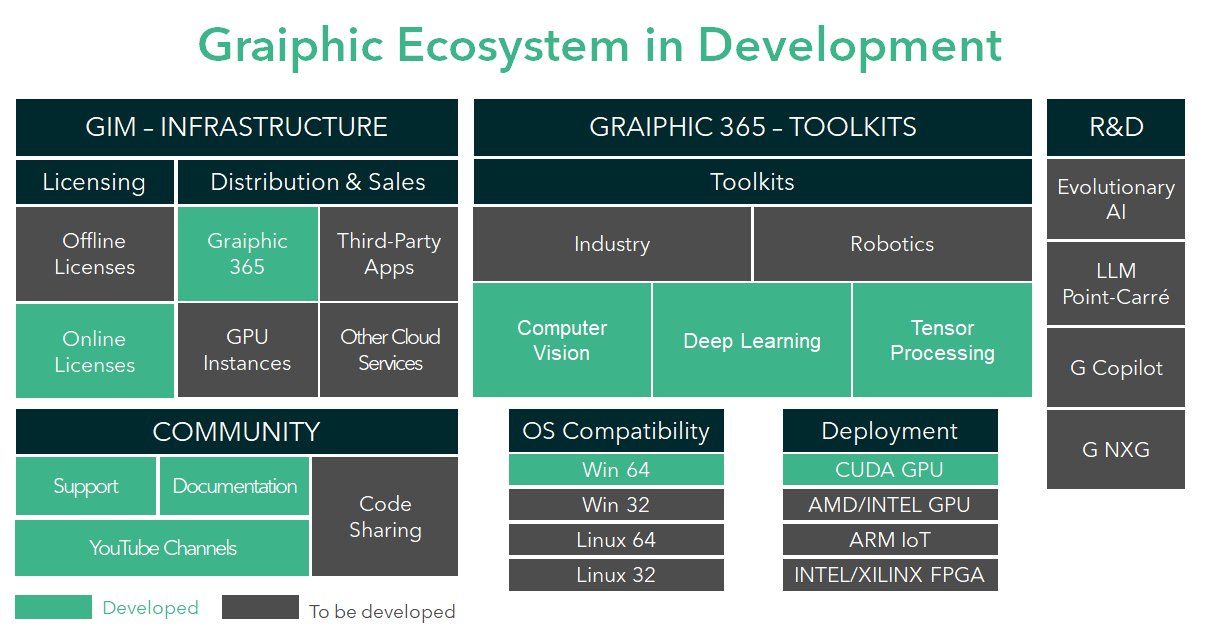

We faced significant challenges, particularly around distribution within the 𝐋𝐚𝐛𝐕𝐈𝐄𝐖 ecosystem. In response, we created GIM, our “Graphic Installation and Management Toolkit”. GIM lets us distribute our toolkits independently, with effective code protection and a robust licensing system. To complement our documentation, we also launched a YouTube channel to help users get the most out of our tools.

In March 2023, we tackled a new challenge: integrating computer vision into 𝐇𝐀𝐈𝐁𝐀𝐋 without relying on the NI Vision toolkit. Our answer was the TIGR Vision Toolkit, followed by Perrine, our tensor computing toolkit. These three independent yet compatible tools (𝐇𝐀𝐈𝐁𝐀𝐋, TIGR, and Perrine), together with our secure distribution and licensing solution, are the 2023 achievements we are proudest of.

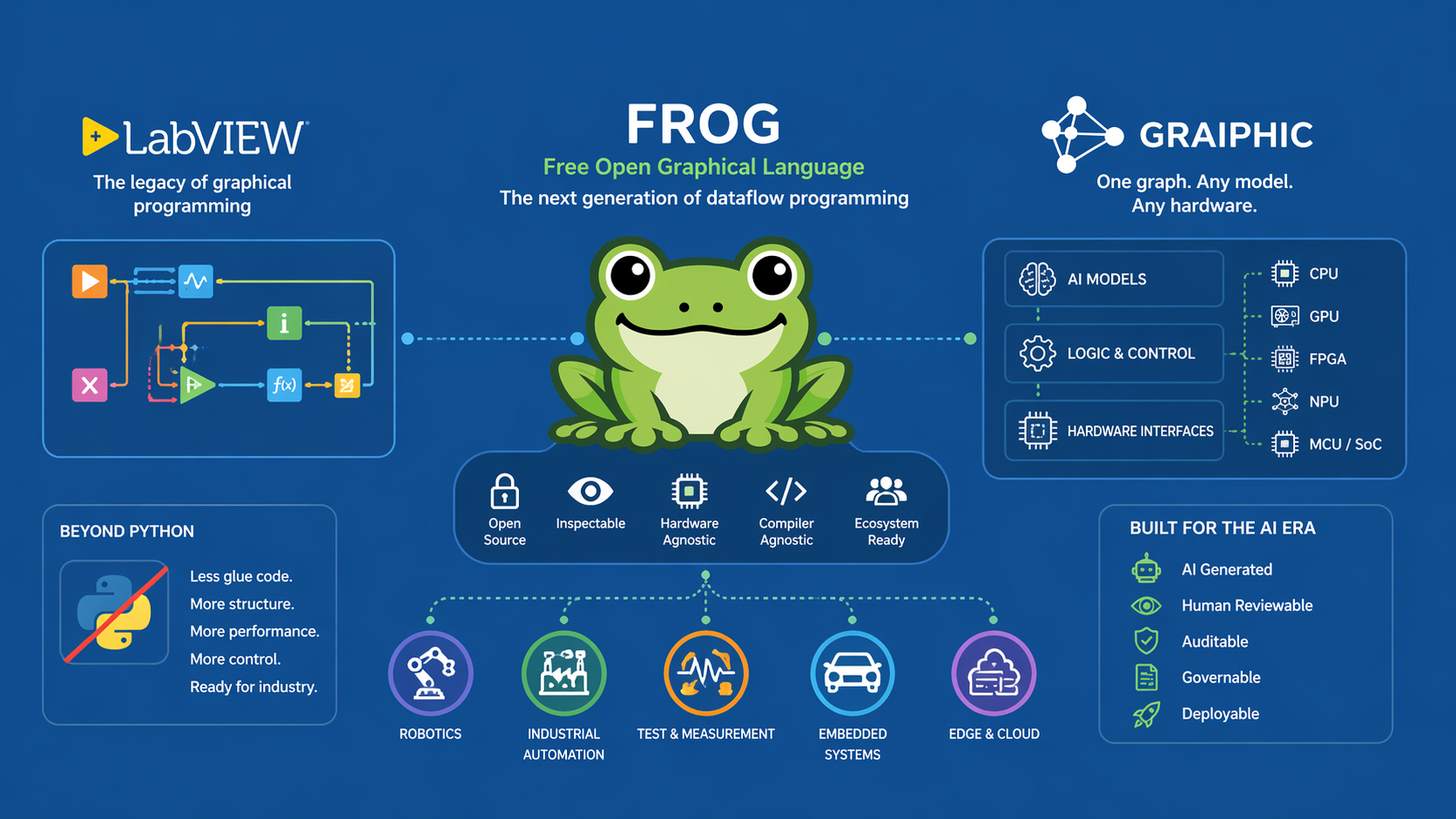

We have always dreamed of reviving the development of LabVIEW NXG. So why not launch a G NXG project? Let’s give ourselves the means to propose something constructive to Emerson. After all, there is no harm in dreaming!

For 2024, we are aiming even higher. GIM will open up to third-party publishers and will make installing our toolkits even simpler. Specific examples will be distributed separately as add-ons, reducing the footprint of our toolkits. Examples that combine several of our tools, such as object detection for computer vision, will be available as packs.

𝐇𝐀𝐈𝐁𝐀𝐋 will be fully optimized to make the most of available GPU resources, and will finally support 𝐫𝐞𝐢𝐧𝐟𝐨𝐫𝐜𝐞𝐦𝐞𝐧𝐭 𝐥𝐞𝐚𝐫𝐧𝐢𝐧𝐠, with example environments such as DOOM, Mario, and StarCraft downloadable via GIM. Our FIG tool will evolve to better support PyTorch models. TIGR computer vision will gain a new annotation tool named COCO, heavily inspired by Roboflow, and will benefit from the integration of the NVIDIA VISION library.

2024 will also see the introduction of a new toolkit dedicated to robotics and embedded systems. It will enable deploying 𝐋𝐚𝐛𝐕𝐈𝐄𝐖 on NVIDIA Jetson boards and the latest Raspberry Pi models, and will simplify communication with Pixhawk hardware. We are even exploring an independent solution for deploying LabVIEW on all Xilinx FPGAs.

We are also excited to announce the launch of Graiphic’s own generative AI project: training an 𝐋𝐋𝐌 𝐰𝐢𝐭𝐡 𝟓𝟓 𝐛𝐢𝐥𝐥𝐢𝐨𝐧 𝐩𝐚𝐫𝐚𝐦𝐞𝐭𝐞𝐫𝐬 (𝟏 𝐦𝐢𝐥𝐥𝐢𝐨𝐧 𝐆𝐏𝐔 𝐡𝐨𝐮𝐫𝐬 𝐨𝐟 𝐭𝐫𝐚𝐢𝐧𝐢𝐧𝐠), which will lead to 𝐂𝐎𝐏𝐈𝐋𝐎𝐓 𝐋𝐚𝐛𝐕𝐈𝐄𝐖.

(We will share more about this in early summer 2024.)

We know these projects are ambitious, but your unwavering support is what enables us to achieve them. 2024 promises to be rich in innovation. The entire Graiphic team wishes you a year full of success, love, and above all, surprises. Count on us to keep innovating and supporting you in your projects.