GitHub – Benchmarking LabVIEW GPU Toolkits

Whitepaper (v1.1): Download the PDF

We’ve published a fully reproducible benchmark suite that compares the main GPU acceleration paths available to LabVIEW developers.

Everything is open—methods, VIs, and results—so you can verify, extend, or challenge our conclusions.

TL;DR

- Graph computing wins. Compiling a dataflow into an ONNX graph and running it with execution providers (TensorRT, CUDA, DirectML) beats per-node DLL calls.

- TensorRT leads across heavy workloads; CUDA EP is close behind. On small streaming tasks, CPU narrows the gap—but Graiphic remains ahead among GPU toolkits.

- DirectML is new in v1.1. It’s often slower than CUDA on NVIDIA, yet it unlocks AMD and Intel GPUs on Windows and still outperforms CPU for many tasks.

- All code is open source. Fork it, reproduce, file issues, and propose new scenarios. We’ll keep the paper and repo updated.

Why Graph Computing Outruns “DLL Switching”

Using LabVIEW only as a front end that calls a C/CUDA routine through a DLL for each node incurs overhead on every operation: repeated boundary crossings, extra buffer management, and limited whole-graph optimization.

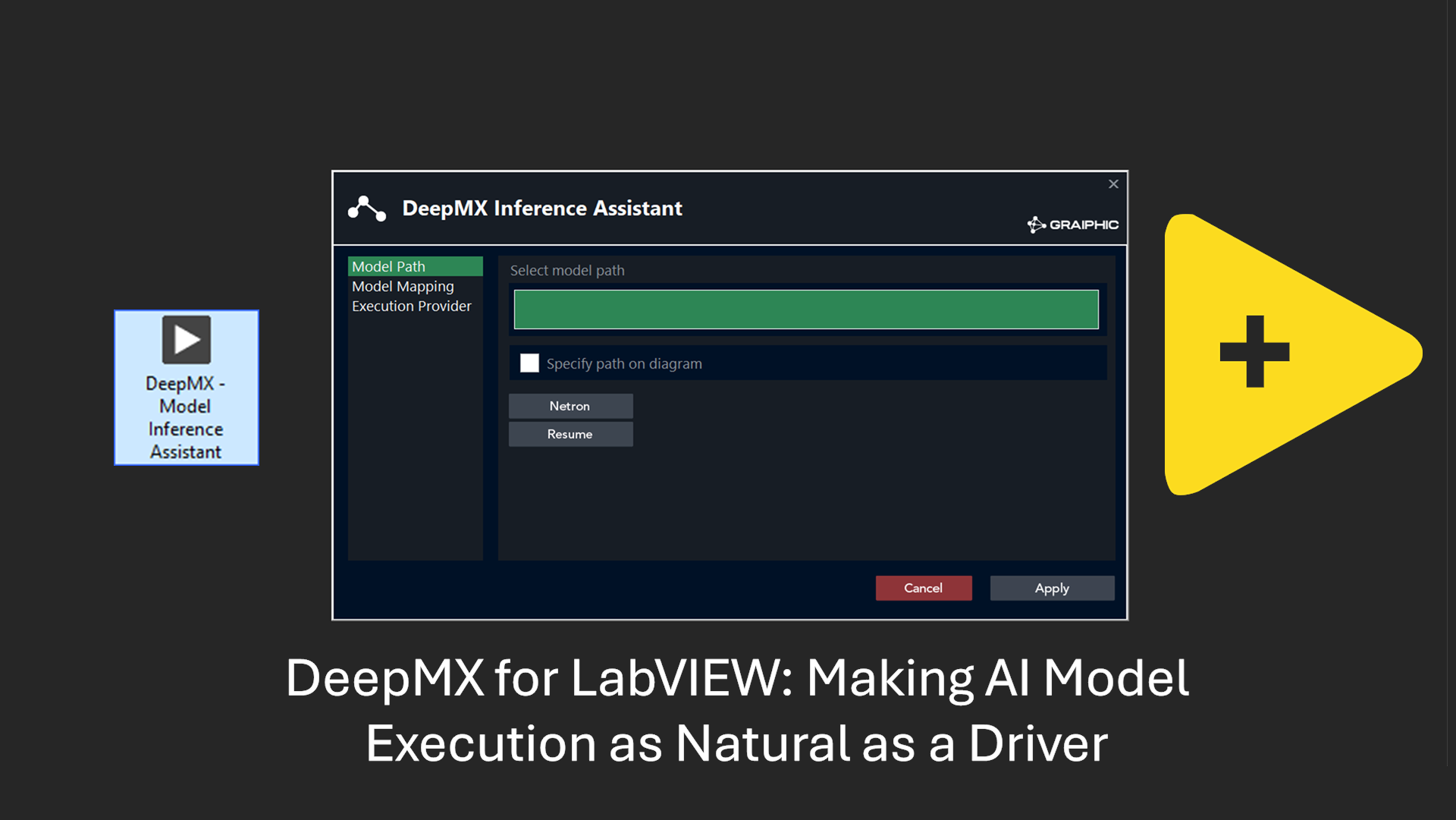

By contrast, Graiphic Accelerator compiles the diagram into a portable ONNX graph, enabling provider-level fusions, kernel scheduling, and memory reuse across the entire pipeline.

Older, ArrayFire-style libraries (e.g., “Array on Fire”) belong to a pre-graph era; they’re useful, but fundamentally constrained compared to a modern compiled-graph runtime.

Graiphic is not “just a wrapper.” We actively contribute to ONNX and ONNX Runtime, and we built a full ONNX editor with dynamic orchestration: we turn a static model format into a schedulable, stateful graph that executes end-to-end inside LabVIEW, which is unique in our ecosystem.
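The overhead argument in this section can be illustrated with a toy cost model. This is only a sketch: the function names and the timing numbers are hypothetical, not measurements from the benchmark, and the model ignores the extra gains a compiled graph can get from fusion and memory reuse.

```python
def per_node_time(n_ops, compute_s, call_overhead_s):
    """Per-node DLL style: every operation pays the call overhead."""
    return n_ops * (compute_s + call_overhead_s)


def compiled_graph_time(n_ops, compute_s, call_overhead_s):
    """Compiled-graph style: the whole graph is dispatched in one call."""
    return n_ops * compute_s + call_overhead_s


# Hypothetical numbers: 100 ops, 20 us of compute each, 50 us overhead per call.
n_ops, compute_s, overhead_s = 100, 20e-6, 50e-6
per_node = per_node_time(n_ops, compute_s, overhead_s)
graph = compiled_graph_time(n_ops, compute_s, overhead_s)

print(f"per-node DLL calls: {per_node * 1e3:.2f} ms")
print(f"compiled graph:     {graph * 1e3:.2f} ms")
```

In this model the per-node cost grows with the number of operations, while the compiled graph pays the crossing cost once, so the difference is largest for pipelines made of many small operations.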

What We Tested

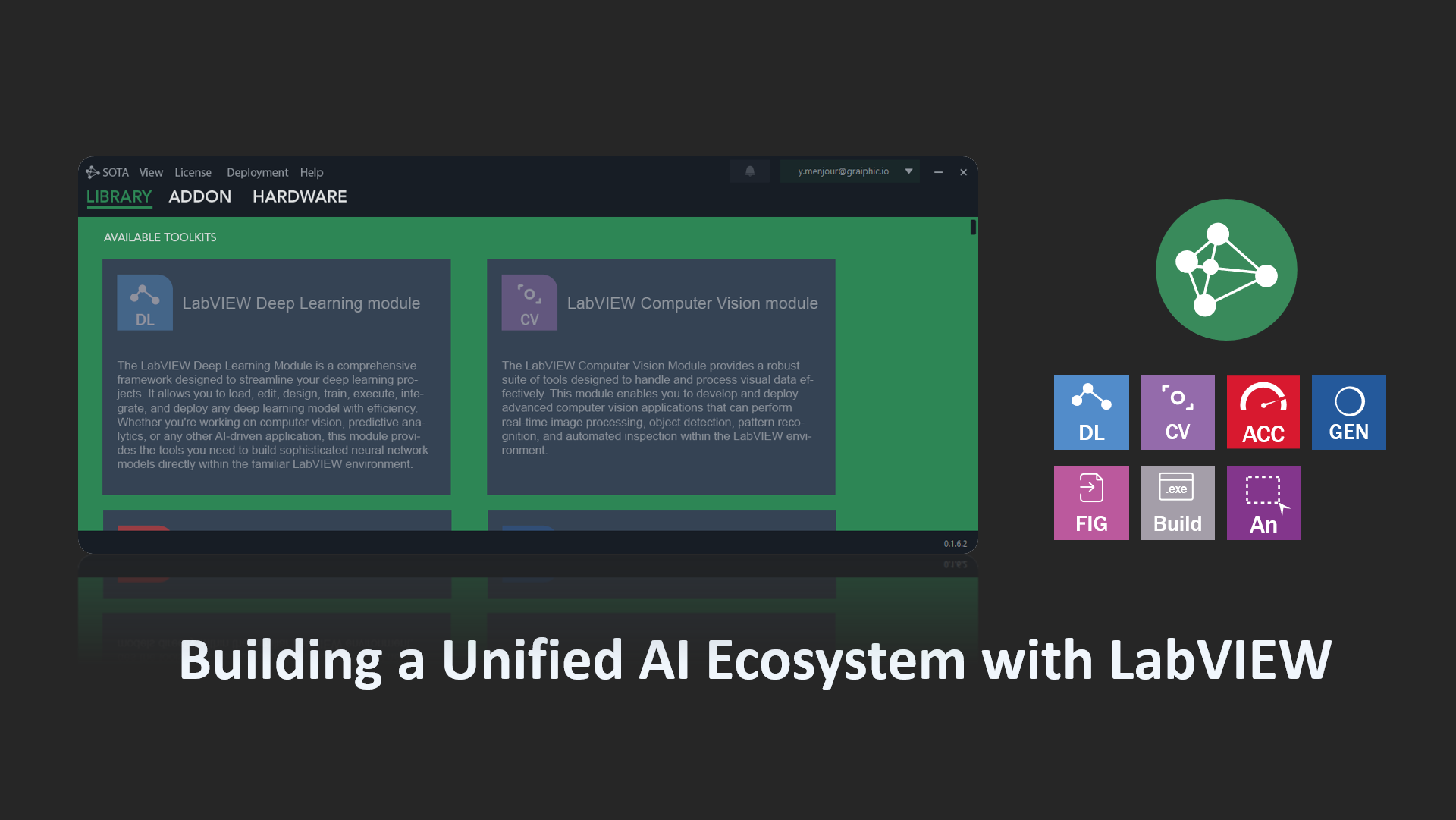

- Toolkits: Graiphic Accelerator (TensorRT / CUDA / DirectML), CuLab (CUDA), G2CPU (CUDA), plus native LabVIEW CPU.

- Runtimes: CUDA 12.9, TensorRT 10.13.3.9, DirectML 1.15.4.0.

- All resources: open VIs, datasets, and charts are in the GitHub repository (link above).

The Four Benchmarks & What They Show

1) GEMM — Canonical Throughput

Matrix multiply + light arithmetic to stress raw math throughput and memory movement.

Result: GPU toolkits beat CPU by a wide margin; within GPUs, TensorRT is fastest, then CUDA EP. CuLab and G2CPU are slower due to per-node DLL overheads.
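For readers who want to express GEMM results as throughput, the usual convention counts 2·m·n·k floating-point operations for an m×k by k×n product. The sketch below shows that arithmetic with a small timing harness (warm-up runs, then the median of several repeats). The pure-Python `matmul` is only a stand-in workload, and every name here is illustrative rather than taken from the benchmark VIs.

```python
import statistics
import time


def gemm_flops(m, n, k):
    """FLOPs for C[m,n] = A[m,k] @ B[k,n], counting each multiply-add as 2."""
    return 2 * m * n * k


def matmul(a, b):
    """Naive pure-Python matrix multiply, used only as a stand-in workload."""
    b_columns = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in b_columns]
            for row in a]


def median_seconds(fn, warmup=2, repeats=5):
    """Run fn a few times untimed, then return the median timed duration."""
    for _ in range(warmup):
        fn()
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


n = 48  # kept small so the pure-Python stand-in finishes quickly
a = [[float(i + j) for j in range(n)] for i in range(n)]
b = [[float(i - j) for j in range(n)] for i in range(n)]

seconds = median_seconds(lambda: matmul(a, b))
gflops = gemm_flops(n, n, n) / seconds / 1e9
print(f"{n}x{n} GEMM: {seconds * 1e3:.2f} ms, {gflops:.4f} GFLOP/s")
```

Reporting the median after a warm-up keeps one-off costs such as first-call initialization out of the figure, which matters when comparing toolkits with different start-up behavior.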

2) Arithmetic Loop — Many Small Ops

Repeated Add/Neg/Mul/Div with reduction across growing arrays.

Result: The gap widens. TensorRT leverages fusion and scheduling for dramatic wins; CUDA EP remains solid; CuLab and G2CPU incur significant overheads.
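To make the fusion point concrete, here is a minimal sketch of an Add/Neg/Mul/Div chain followed by a reduction, written once as separate passes and once as a single fused pass. The exact operation order and the constant divisor are assumptions made for illustration; the benchmark VIs define the real chain.

```python
def op_by_op(x, y):
    """One pass and one intermediate buffer per operation."""
    added = [a + b for a, b in zip(x, y)]              # Add
    negated = [-v for v in added]                      # Neg
    multiplied = [v * b for v, b in zip(negated, y)]   # Mul
    divided = [v / 2.0 for v in multiplied]            # Div
    return sum(divided)                                # reduction


def fused(x, y):
    """Same arithmetic in a single pass with no intermediate buffers."""
    return sum((-(a + b) * b) / 2.0 for a, b in zip(x, y))


# Growing array sizes, as in the benchmark description.
for size in (1_000, 10_000, 100_000):
    x = [float(i % 7) for i in range(size)]
    y = [float(i % 5) + 1.0 for i in range(size)]
    print(size, op_by_op(x, y), fused(x, y))
```

The op-by-op version allocates four intermediate arrays per call, while the fused version allocates none. A graph compiler can apply the same kind of transformation automatically, which per-node calls cannot do because each call sees only one operation.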

3) Complex Numbers — Deliberately Hard

Complex datatypes aren’t yet native in ONNX. We implemented a temporary strategy by packing real/imag along an extra tensor dimension.

This is functionally correct but not optimized—and we did it on purpose.

Result: Even under this penalty, TensorRT stays ahead; CUDA EP scales predictably; DLL-style approaches degrade earlier.

Our roadmap includes upstreaming native complex support to ONNX/ORT.
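The packing strategy described above can be sketched as follows: each complex value becomes a `[real, imag]` pair along an extra trailing dimension, and a complex multiply is rebuilt from real-valued operations. This is a plain-Python illustration under that assumption, with hypothetical helper names; it is not the ONNX graph used in the benchmark.

```python
def pack(values):
    """Store each complex number as [real, imag] along an extra dimension."""
    return [[z.real, z.imag] for z in values]


def unpack(packed):
    """Rebuild complex numbers from [real, imag] pairs."""
    return [complex(re, im) for re, im in packed]


def packed_multiply(a, b):
    """(ar + i*ai)(br + i*bi) = (ar*br - ai*bi) + i*(ar*bi + ai*br)."""
    return [[ar * br - ai * bi, ar * bi + ai * br]
            for (ar, ai), (br, bi) in zip(a, b)]


x = [1 + 2j, 3 - 1j, -0.5 + 0.25j]
y = [2 - 1j, 0.5 + 4j, 1 + 1j]

result = unpack(packed_multiply(pack(x), pack(y)))
expected = [p * q for p, q in zip(x, y)]
print(result)
print(expected)
```

One complex multiply turns into four real multiplies plus an add and a subtract, with extra slicing on the packed dimension. That is the kind of cost the unoptimized strategy carries.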

4) RF Streaming — Real-World Signal Processing

A field scenario provided by NI: streaming chunks (~32k samples), FFT, log, add/div, display. We reproduced it as-is across toolkits.

Result: On small chunks, the CPU stays competitive because it has little launch latency to amortize. Graiphic’s TensorRT and CUDA paths still lead among GPUs; CuLab and G2CPU show more variance.
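The per-chunk pipeline (FFT, log, add/div) can be sketched in plain Python as below. The details are assumptions for illustration: a radix-2 FFT stands in for the toolkit FFT, the log is a base-10 log of the magnitude, the offset and divisor are arbitrary constants, the display step is omitted, and the demo uses 64-sample chunks rather than the roughly 32k samples of the real scenario.

```python
import cmath
import math

CHUNK = 64  # small for the demo; the benchmark streams chunks of ~32k samples


def fft(x):
    """Recursive radix-2 FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return list(x)
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        t = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def process_chunk(chunk, offset=1.0, divisor=2.0):
    """FFT -> log magnitude -> add -> div, mirroring the listed steps."""
    spectrum = fft(chunk)
    log_mag = [math.log10(abs(c) + 1e-12) for c in spectrum]
    return [(v + offset) / divisor for v in log_mag]


def stream(samples, chunk_size=CHUNK):
    """Yield one processed spectrum per full chunk of input."""
    for start in range(0, len(samples) - chunk_size + 1, chunk_size):
        yield process_chunk(samples[start:start + chunk_size])


# Hypothetical input: a complex tone centered on FFT bin 4, three chunks long.
signal = [cmath.exp(2j * cmath.pi * 4 * n / CHUNK) for n in range(3 * CHUNK)]
peaks = [max(range(CHUNK), key=out.__getitem__) for out in stream(signal)]
print(peaks)
```

Each chunk is processed independently, so the work per call is small and fixed. That is why per-call latency, rather than raw throughput, tends to decide the ranking in this scenario.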

Execution Providers: CUDA, TensorRT, and now DirectML

The Accelerator lets you select the best execution provider for each target:

- TensorRT (NVIDIA): ahead-of-time graph compilation, heavy operator fusion, and kernel scheduling for maximum throughput and determinism.

- CUDA EP (NVIDIA): highly optimized kernels with strong performance and broad operator coverage.

- DirectML (Windows, GPU-vendor agnostic): a low-level ML API built into Windows that runs on AMD and Intel GPUs as well as NVIDIA. It often trails CUDA on pure speed but still handily beats CPU on many tasks and massively broadens deployment options on standard Windows PCs and ruggedized systems.

Practical takeaway: choose TensorRT for peak NVIDIA performance, CUDA for balance and coverage, and DirectML when portability across GPU vendors matters.
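The takeaway above amounts to a preference order with fallbacks. The sketch below expresses it using ONNX Runtime's Python provider names (`TensorrtExecutionProvider`, `CUDAExecutionProvider`, `DmlExecutionProvider`, `CPUExecutionProvider`). It is an analogy only: the Accelerator exposes this choice inside LabVIEW, and the goal labels and helper functions here are hypothetical.

```python
# Preference orders derived from the practical takeaway; labels are illustrative.
FALLBACK_ORDER = {
    "peak": ["TensorrtExecutionProvider", "CUDAExecutionProvider",
             "DmlExecutionProvider", "CPUExecutionProvider"],
    "balanced": ["CUDAExecutionProvider", "DmlExecutionProvider",
                 "CPUExecutionProvider"],
    "portable": ["DmlExecutionProvider", "CPUExecutionProvider"],
}


def choose_providers(available, goal="peak"):
    """Keep the preferred providers that are actually available, in order."""
    chosen = [p for p in FALLBACK_ORDER[goal] if p in available]
    return chosen or ["CPUExecutionProvider"]


def detect_available():
    """Ask ONNX Runtime what it supports; assume CPU only if it is missing."""
    try:
        import onnxruntime as ort
    except ImportError:
        return ["CPUExecutionProvider"]
    return ort.get_available_providers()


providers = choose_providers(detect_available(), goal="peak")
print(providers)
```

With ONNX Runtime installed, the resulting list can be passed as the `providers` argument of `onnxruntime.InferenceSession`, which tries the entries in order.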

Open, Reproducible, Extensible

- Grab the VIs and data, reproduce locally, and compare results.

- Propose new tests (asynchronous pipelines, parallelism patterns, mixed precision, quantization) via GitHub issues/PRs.

- We welcome contributions from toolkit vendors and the community.

Changelog

- v1.1: Added DirectML execution provider and updated results/analysis accordingly.