For years, engineers have relied on drivers to make complex hardware accessible. You connect a device, configure a few parameters, and integrate it into your application without having to rebuild the entire low-level stack yourself. That model changed engineering workflows. It made powerful systems usable.

At Graiphic, we believe AI should work the same way.

That is exactly the idea behind DeepMX.

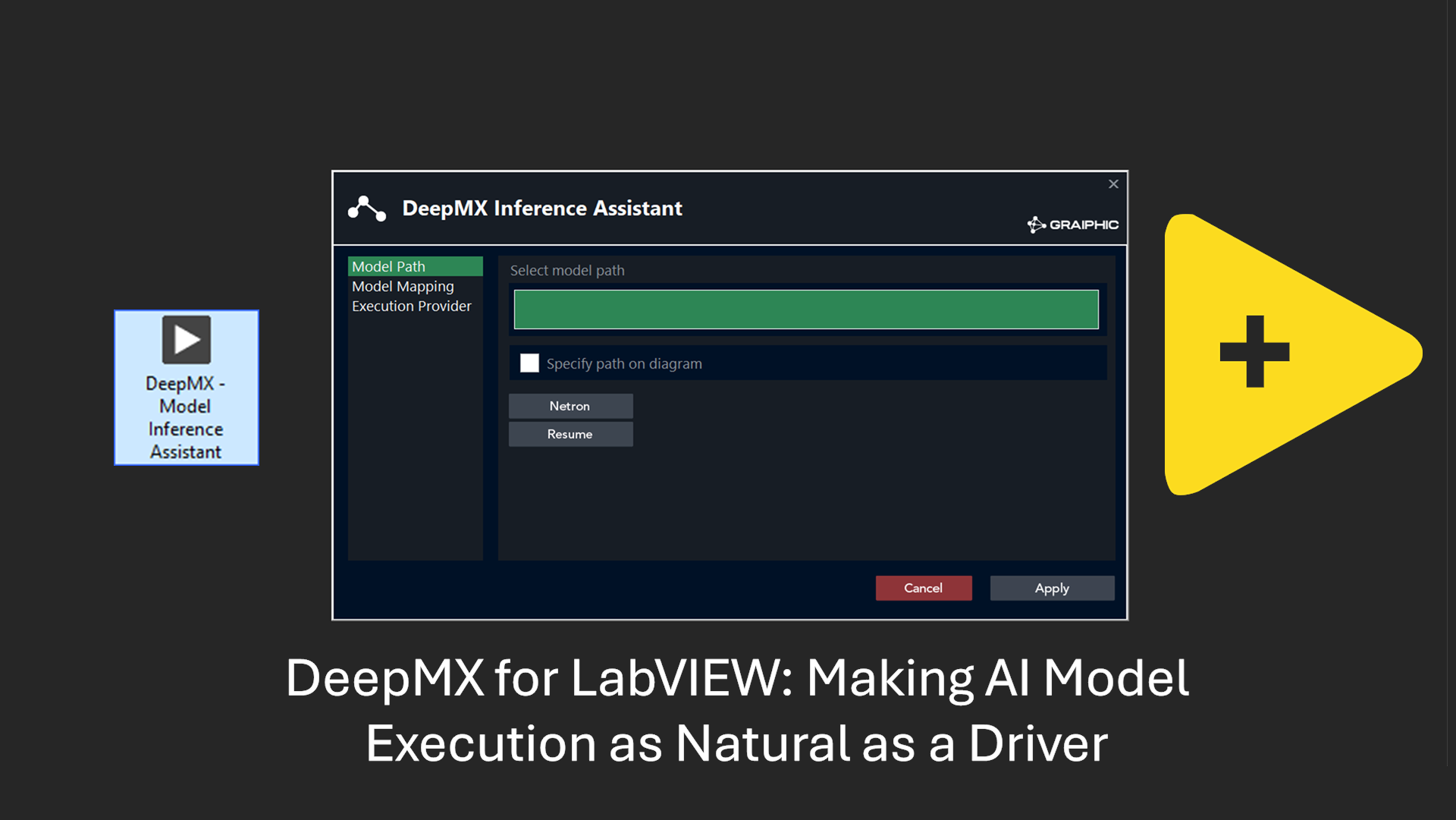

DeepMX is Graiphic’s new driver-oriented execution layer for AI models in LabVIEW. Its purpose is simple: help engineers load a model, run it efficiently on their hardware, and integrate it into their application without having to deal with runtime complexity, backend selection, or optimization details.

In other words, we want AI model execution to feel as straightforward and natural as using a driver.

Watch the first DeepMX video

Why this matters

AI is now everywhere in engineering conversations. But in real industrial workflows, adoption often remains harder than it should be.

The problem is not only the model itself. The real challenge starts after the model exists.

How do you run it efficiently?

How do you choose the right hardware backend?

How do you keep the integration clean inside an existing LabVIEW application?

How do you make it usable by engineers who want results, not infrastructure headaches?

That is where DeepMX comes in.

DeepMX acts as an abstraction layer for AI model execution. Instead of forcing users to manually manage runtimes, execution providers, and hardware-specific logic, it creates a simple, coherent entry point inside LabVIEW.

The goal is not to expose more complexity.

The goal is to remove it.

A driver mindset for AI

National Instruments built a strong legacy by making hardware easier to use. DAQ workflows became natural because the software layer made the underlying complexity manageable.

We see the next frontier in a similar way.

The future is not only about connecting sensors.

It is also about connecting models.

That is why DeepMX is not presented as just another AI utility. It is designed with a driver philosophy.

Instead of asking engineers to become runtime specialists, DeepMX lets them stay focused on what really matters:

- building applications
- connecting systems
- making decisions
- deploying useful intelligence where it creates value

This approach matters because AI in industry should not feel exotic. It should feel operational.

What DeepMX does

DeepMX is designed to execute AI models efficiently in LabVIEW while staying as accessible as possible.

Today, it is natively compatible with ONNX models, which gives users a practical and flexible way to deploy models across different environments. ONNX is an important part of this vision because it provides a portable format for model execution without locking engineers into a single framework.

DeepMX then maps that model execution onto the most relevant hardware backend depending on the target machine.

Currently available execution providers include:

- CPU
- DirectML
- CUDA
- TensorRT
- OpenVINO
- oneDNN

This means the same model can be executed across heterogeneous compute architectures while remaining integrated inside a LabVIEW workflow.

That is a major step forward for real-world engineering teams.

It allows users to think less about infrastructure and more about outcomes.
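To make the backend-mapping idea concrete, here is a minimal Python sketch of one way a runtime could pick an execution provider from the list above. It is not DeepMX code: this article does not describe how DeepMX selects a backend, so the preference order, the function name `choose_providers`, and the CPU fallback rule are all assumptions made for illustration.

```python
from typing import Iterable, List, Optional

# Assumed preference order (illustrative only): the most specialized
# accelerator first, the CPU last as the universal fallback.
PREFERRED_ORDER: List[str] = [
    "TensorRT",
    "CUDA",
    "DirectML",
    "OpenVINO",
    "oneDNN",
    "CPU",
]


def choose_providers(
    available: Iterable[str],
    preferred: Optional[List[str]] = None,
) -> List[str]:
    """Return the available providers sorted by preference.

    Hypothetical helper: providers missing from the machine are skipped,
    and "CPU" is always appended so that a model can still run.
    """
    order = PREFERRED_ORDER if preferred is None else preferred
    available_set = set(available)
    chosen = [name for name in order if name in available_set]
    if "CPU" not in chosen:
        chosen.append("CPU")
    return chosen


if __name__ == "__main__":
    # Example machine exposing a CUDA GPU, DirectML, and the CPU.
    print(choose_providers(["CPU", "CUDA", "DirectML"]))
```

On a machine that exposes CPU, CUDA, and DirectML, the sketch ranks CUDA first, then DirectML, then CPU. With a driver-style layer, a decision of this kind would sit behind the API instead of in the application itself.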

From model file to usable result

One of the strongest ideas behind DeepMX is simplicity of use.

The vision is clear: an engineer should be able to load a model in one click, run it on the available hardware, and integrate the result into a LabVIEW application with minimal friction.

That experience matters.

Because for many teams, the difference between a promising technology and a deployed technology is not raw performance alone. It is usability. It is integration. It is the ability to move from experimentation to operation without creating a fragile pipeline.

DeepMX is built to close that gap.

Rather than treating AI as a disconnected expert-only layer, it makes model execution feel like a native part of the application architecture.

That is how AI becomes practical.
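The load, run, and integrate flow described in this section follows the same open/use/close lifecycle that hardware drivers use. The Python sketch below illustrates that pattern only. `ModelSession`, its methods, and the stand-in "model" that doubles its inputs are hypothetical names invented for this example; they are not the DeepMX API, which is exposed in LabVIEW and is not detailed in this article.

```python
from typing import Callable, List, Optional, Sequence


class ModelSession:
    """Hypothetical driver-style wrapper: open a session, run it, close it."""

    def __init__(self, model_path: str, backend: str = "CPU") -> None:
        self.model_path = model_path
        self.backend = backend
        self._model: Optional[Callable[[Sequence[float]], List[float]]] = None

    def open(self) -> "ModelSession":
        # Stand-in for loading a real model file: this placeholder
        # "model" simply doubles every input value.
        self._model = lambda values: [2.0 * v for v in values]
        return self

    def run(self, inputs: Sequence[float]) -> List[float]:
        if self._model is None:
            raise RuntimeError("session is not open")
        return self._model(inputs)

    def close(self) -> None:
        # Release the model so the session cannot be used by accident.
        self._model = None

    def __enter__(self) -> "ModelSession":
        return self.open()

    def __exit__(self, exc_type, exc, tb) -> None:
        self.close()


if __name__ == "__main__":
    # The calling code never touches runtime or backend details.
    with ModelSession("model.onnx", backend="CPU") as session:
        print(session.run([1.0, 2.5]))
```

The application code in this sketch only sees three steps, which is the property the driver analogy is meant to convey.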

Built for accessible industrial AI

A lot of AI communication focuses on scale, cloud, and hype.

Our direction is different.

We believe there is enormous value in local, sovereign, hardware-aware execution. Many industrial applications require control, predictability, privacy, and deployability close to the field. In those environments, a clean local execution stack is not a luxury. It is a requirement.

DeepMX is aligned with that philosophy.

It is designed for engineers who want to deploy intelligence where it is needed:

- on workstations
- on industrial PCs
- on GPU-enabled systems
- on edge devices
- inside real applications that must run reliably

That is why execution efficiency and hardware compatibility matter so much.

This is not only about making AI possible.

It is about making AI usable in engineering reality.

More than one tool: the beginning of a stack

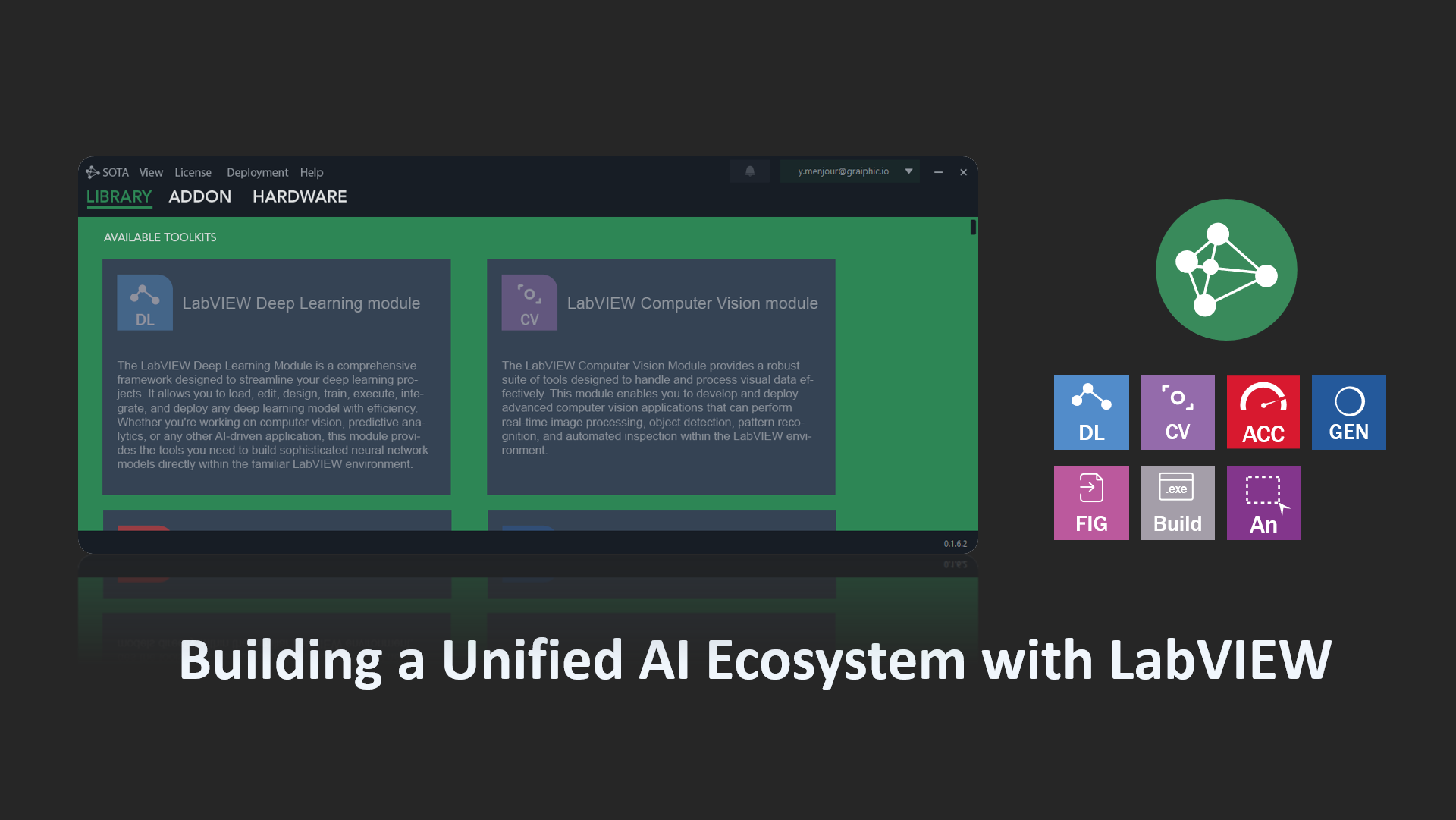

DeepMX is an important step, but it is not the whole story.

What is emerging at Graiphic is not a single isolated component. It is a broader software stack built around a clear philosophy: independent, composable execution layers for next-generation engineering applications.

DeepMX is the first visible piece of that stack:

- DeepMX – AI model execution
- VisionMX – vision capture and processing
- GraphMX – execution of computational graphs and pipelines
- GenMX – future execution layer for generative AI

Each toolkit has its own role. Each one is designed to remain useful on its own. And together, they point toward something larger: a modular software foundation for modern industrial intelligence.

We are only starting to show parts of it.

There is much more coming.

Why this approach is different

What makes this direction interesting is not just the technology. It is the architectural mindset behind it.

We do not believe engineers need more fragmented tools.

We believe they need better execution layers.

A strong stack should help users move from hardware to data, from data to models, from models to decisions, and from decisions to deployable applications.

That is why composability matters.

A vision pipeline should not feel disconnected from AI execution.

AI execution should not feel disconnected from graph orchestration.

And future generative workflows should not feel disconnected from the rest of the application world.

By thinking in terms of execution layers rather than isolated demos, we can build something much more durable.

That is where the real surprise is.

Not in one feature.

But in the stack that starts to appear when the pieces connect.
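As a rough illustration of what composable execution layers mean in practice, the Python sketch below chains three independent stages (capture, inference, decision) into one pipeline. Every name here is a hypothetical stand-in: the stages return hard-coded or trivial values, and nothing in the sketch reflects how DeepMX, VisionMX, or GraphMX are actually implemented.

```python
from functools import reduce
from typing import Any, Callable, List

Stage = Callable[[Any], Any]


def compose(*stages: Stage) -> Stage:
    """Chain stages left to right: each output feeds the next stage."""

    def pipeline(value: Any) -> Any:
        return reduce(lambda acc, stage: stage(acc), stages, value)

    return pipeline


def capture(_: Any) -> List[float]:
    # Stand-in for a vision or sensor layer: returns a fixed "frame".
    return [0.2, 0.9, 0.4]


def infer(frame: List[float]) -> float:
    # Stand-in for model execution: the score is the largest value.
    return max(frame)


def decide(score: float) -> str:
    # Stand-in for application logic: threshold the score.
    return "reject" if score > 0.8 else "accept"


# Each stage stays usable on its own, and the pipeline is their composition.
inspect_part = compose(capture, infer, decide)

if __name__ == "__main__":
    print(inspect_part(None))
```

Because each stage only depends on the shape of its input, any one of them can be replaced without rewriting the others, which is the practical benefit of thinking in layers.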

Designed to stay accessible

Even when the underlying systems are advanced, the user experience should remain approachable.

That principle is central for us.

We want engineers, developers, integrators, and technical teams to feel that these tools are understandable and usable. Powerful software should not require unnecessary opacity. If a model can run in a workflow, then it should be possible to integrate it cleanly. If hardware acceleration exists, then it should be possible to benefit from it without turning every project into a backend engineering exercise.

That is why DeepMX aims for a balance that is often missing:

- technically serious
- industrially relevant
- easy to approach
- ready to integrate

This combination is where real adoption starts.

This is only the beginning

The first DeepMX presentation is more than a product preview. It is an opening signal.

It shows a direction for LabVIEW users and for engineering teams who want AI to become a real operational capability, not just a separate experiment.

It also shows how Graiphic is thinking about the future: not as disconnected features, but as a coherent stack where model execution, vision, graph orchestration, and future AI layers can work together in a way that feels natural.

That future should be powerful.

But it should also be usable.

DeepMX is part of that answer.

And yes, more surprises are coming.

Watch, share, and contact Graiphic

If you want to see the first DeepMX demonstration, watch the video here:

DeepMX video: https://youtu.be/Ianueozg23I

You can also explore more demonstrations on the Graiphic YouTube channel:

Graiphic YouTube channel: https://www.youtube.com/@graiphic

If you have a project and want to discuss AI integration, industrial vision, model execution, or next-generation engineering software, contact Graiphic.

If this direction speaks to you, share the article and follow what comes next.

This is just the beginning.